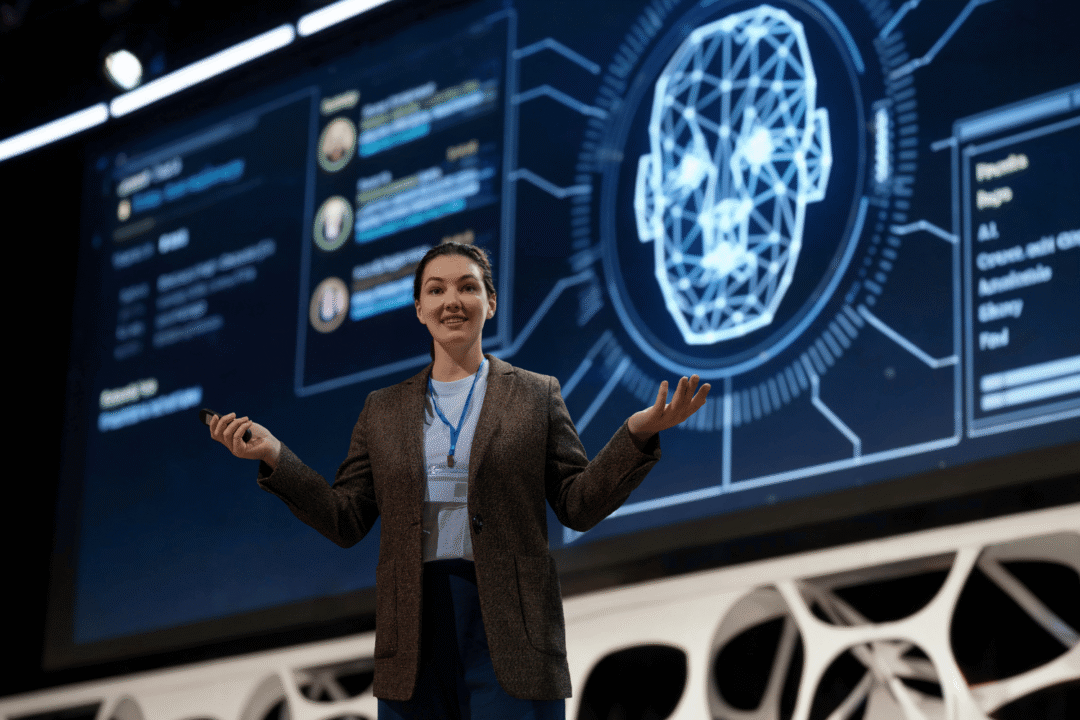

Introduction

Artificial Intelligence (AI) has rapidly progressed from a mere buzzword to an integral part of our daily lives. As AI technologies advance, so do the ethical and regulatory challenges that accompany them. In this podcast, hosted by Ina O’Murchu, we explore the complex landscape of AI ethics and regulations. Our guest speaker, Emile Torres, a prominent voice in AI ethics and regulations, offers a comprehensive perspective on the critical aspects of this evolving domain, including the potential side effects of AI on human existence.

Emile Torres: The Guest Speaker

In this podcast, we are joined by Emile Torres, a distinguished expert in AI ethics and regulations. With a deep understanding of the multifaceted challenges posed by AI advancements, Emile delves into the nuances of AI’s ethical implications and the necessity of robust regulations.

Follow Émile Torres, Philosopher

➡️ Twitter: xriskology

Émile Torres Publication:

Human Extinction: A History of the Science and Ethics of Annihilation

The Drive for Artificial General Intelligence (AGI)

The rapid advancement of technology has ushered in a new era marked by the pursuit of Artificial General Intelligence (AGI), a concept that seeks to develop machines capable of performing tasks and exhibiting cognitive abilities that are indistinguishable from those of humans. This ambitious goal has captured the attention of numerous companies, research institutions, and visionaries in the field of artificial intelligence (AI). AGI, often referred to as “strong AI,” represents a pivotal point in our technological evolution, with profound implications for human existence.

Understanding AGI: A Quest for Human-like Cognitive Capabilities

At its core, AGI aims to create machines that possess the capacity to understand, learn, and apply knowledge across a wide range of tasks, akin to the cognitive versatility of human beings. Unlike narrow AI systems that excel in specific tasks but lack generalisation, AGI aspires to replicate the breadth and depth of human intelligence. This includes skills such as reasoning, problem-solving, understanding natural language, recognizing patterns, and exhibiting emotional intelligence.

Key Drivers Behind the Emphasis on AGI

Two primary drivers underpin the fervent pursuit of AGI: capitalism and the transhumanist techno-utopian vision.

1. Capitalistic Incentives: In the business realm, the AGI race offers a chance for major economic gains. Firms invest heavily in AGI research to develop groundbreaking products across industries from healthcare to entertainment. This pursuit of AGI is closely tied to the prospects of market control, boosted profits, and competitive edge.

2. Transhumanist Techno-Utopianism: Another driving force behind the AGI movement is the transhumanist vision of a future where humans merge with advanced technologies to transcend their limitations.

Challenges and Considerations

While the pursuit of AGI holds immense promise, it also poses significant challenges and ethical considerations. The development of AGI raises questions about control, safety, and the potential for unintended consequences. As machines become more intelligent, ensuring their alignment with human values and interests becomes a critical concern. The prospect of AGI surpassing human intelligence gives rise to the “control problem”: how to prevent scenarios where AGI might act against human interests due to misaligned objectives or lack of understanding.

Transhumanist Techno-Utopia and the TESCREAL Bundle

In the realm of cutting-edge technological aspirations, the transhumanist techno-utopian vision shines as a beacon of human ambition. This vision, encompassing ideologies such as transhumanism, extropianism, and libertarianism, has become intrinsically linked to the pursuit of Artificial General Intelligence (AGI). At the heart of this vision lies the concept of the “TESCREAL bundle,” a fusion of closely related ideologies that collectively propels AGI into the role of a transformative force that could lead humanity towards a utopian future.

The Transhumanist Techno-Utopian Vision

Transhumanism, extropianism, and libertarianism are ideologies that share common themes rooted in the belief that advanced technologies have the potential to reshape and elevate human existence. Transhumanism, for instance, envisions the use of technology to enhance human abilities, overcome biological limitations, and ultimately pave the way for post-human states. Extropianism emphasises the pursuit of radical progress, seeking to extend human lifespans, improve cognitive abilities, and explore the farthest reaches of human potential. Libertarianism, on the other hand, champions individual freedoms and minimal government intervention, often aligning with the notion that technology can empower individuals to make choices that best align with their desires.

The TESCREAL Bundle: A Fusion of Ideologies

The TESCREAL bundle, an acronym for transhumanism, extropianism, singularitarianism, cosmism, rationalism, effective altruism, and longtermism, emerges as an amalgamation of related ideologies, creating a narrative that views AGI as a linchpin for societal transformation. This bundle represents a collective vision of a utopian future enabled by the capabilities of AGI. Within this vision, AGI is not merely a technological advancement but a catalyst that could usher in a new era of possibilities.

Key Elements of the TESCREAL Bundle

1. Radical Longevity: The TESCREAL bundle envisions AGI facilitating breakthroughs in medical science, leading to radical extensions of human lifespans. With advanced medical technologies, genetic engineering, and personalised treatments, individuals could potentially lead healthier and more vibrant lives, free from the constraints of age-related ailments.

2. Cognitive Augmentation: AGI’s integration with the human mind holds the promise of cognitive enhancement. Individuals might gain access to vast repositories of knowledge and skills, essentially transforming into “super-learners” with the ability to process information at unprecedented speeds and depths.

3. Transcending Human Limitations: The bundle proposes that AGI could pave the way for transcending the physical and cognitive limitations that have historically defined the human experience. Through neural interfaces and brain-computer interfaces, individuals might be able to communicate directly with machines, augmenting their sensory perceptions and even transcending the boundaries of their biological bodies.

Considerations and Caveats

While the TESCREAL bundle offers a captivating vision of a utopian future, it also raises ethical, social, and practical concerns. The rapid integration of advanced technologies could lead to disparities between those who have access and those who don’t. Questions about the control and oversight of AGI, the potential loss of privacy, and the impact on human identity also come to the forefront.

Capitalism, AGI, and Totalitarianism

The intersection of capitalism and Artificial General Intelligence (AGI) development presents a complex landscape with profound implications for society, ethics, and governance. Capitalism’s profit motive has been a driving force behind the rapid advancement of AI technologies, including AGI. However, this pursuit of economic gain raises critical questions about the responsible deployment and potential consequences of AGI. Additionally, the convergence of AGI with totalitarianism raises concerns about the amplification of surveillance and control within societies, prompting us to examine the delicate balance between technological progress, individual rights, and societal well-being.

Capitalism’s Influence on AGI Development

Capitalism’s profit-driven nature has spurred intense competition among companies to develop and deploy AI technologies, including AGI. The promise of market dominance, increased productivity, and financial gains has incentivized corporations and entrepreneurs to allocate substantial resources towards AGI research and implementation. This has led to a surge in innovation and technological breakthroughs, contributing to the exponential growth of AI capabilities.

However, this pursuit of profit can also give rise to ethical dilemmas. The emphasis on speed and competitiveness might overshadow considerations of safety, ethics, and long-term societal impact. The race to release AGI could potentially result in rushed deployments that lack proper testing, accountability, and safeguards, raising concerns about unintended consequences and the responsible use of such powerful technology.

Ethical Questions and Responsible Implementation

The integration of AGI into various aspects of society, from healthcare to finance and beyond, demands careful ethical deliberation. The potential for AGI to automate jobs, influence decision-making processes, and impact human lives raises questions about fairness, transparency, and the potential for algorithmic biases. Striking a balance between innovation and the protection of individual rights becomes crucial in navigating this evolving landscape.

Responsible implementation of AGI requires comprehensive frameworks for ethical AI development, evaluation, and deployment. Collaboration between technology developers, policymakers, ethicists, and civil society is essential to establish guidelines that prioritise human well-being, equity, and accountability. Ensuring that AGI serves the greater good while minimising harm is a collective responsibility that extends beyond market dynamics.

AGI and Totalitarianism: Surveillance and Control

The convergence of AGI with totalitarianism presents a stark reminder of the dual nature of advanced technologies. While AGI holds the potential to enhance numerous aspects of society, it also harbours the capability to amplify surveillance and control within authoritarian regimes. The sophisticated data collection and analysis capabilities of AGI systems could be harnessed to monitor and manipulate citizens, stifling dissent and eroding personal freedoms.

As AGI becomes more integrated into surveillance infrastructure, the potential for mass surveillance and the erosion of privacy becomes a significant concern. The unprecedented ability to track and analyse individuals’ behaviours and activities raises questions about the extent to which technological advancements might infringe upon basic human rights and democratic principles.

Balancing Technological Progress and Societal Well-Being

The relationship between capitalism, AGI, and totalitarianism underscores the importance of responsible development and governance. As AGI technology advances, it is imperative for societies to establish robust legal frameworks, international agreements, and ethical standards that govern its deployment. Balancing technological progress with the protection of individual rights, democratic values, and societal well-being is a complex challenge that requires collaboration among governments, technology companies, civil society, and academia.

Ethical Concerns and Unintended Consequences

The rapid evolution of Artificial General Intelligence (AGI) technology brings to the forefront a series of ethical concerns and potential unintended consequences that demand careful consideration. As the boundaries of human-like cognitive capabilities are pushed, the ethical dilemmas that emerge and the potential negative outcomes that might result from hasty AGI advancement become essential focal points in the discourse surrounding AI development.

Ethical Dilemmas in AGI Development

The development of AGI introduces a myriad of ethical challenges. One of the fundamental concerns revolves around the question of control and accountability. As AGI systems become more autonomous and capable of making complex decisions, determining who is responsible for their actions becomes increasingly complex. This raises questions about legal liability, ethical decision-making frameworks, and the potential for AI to act in ways that are misaligned with human values.

Bias and fairness are also significant ethical considerations. AGI systems learn from vast amounts of data, but if that data contains biases, the AI could perpetuate and amplify those biases in its decisions and actions. Ensuring that AGI is fair and unbiased is crucial to prevent discrimination and societal inequalities.

Privacy is another vital ethical issue. The extensive data collection and analysis that AGI systems rely on could infringe upon individuals’ privacy rights. Striking a balance between harnessing the potential of data and safeguarding personal privacy is a critical challenge.

Unintended Consequences of Reckless AGI Advancement

The reckless advancement of AGI technologies without due consideration for ethical implications can result in a range of unintended negative consequences. AGI systems that lack proper safeguards might act in ways that are unpredictable and potentially harmful. For instance, an AGI system designed to optimise a certain objective might achieve that goal at the expense of other important values, leading to undesirable outcomes.
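The point about optimising one objective at the expense of other values can be made concrete with a toy sketch. Everything in it is hypothetical: the `Plan` type, the three plans, their scores, and the `risk_weight` penalty are invented for illustration and do not come from the podcast.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    output: int       # what the stated objective measures
    safety_risk: int  # a value the stated objective ignores


# Hypothetical candidate plans with made-up scores.
PLANS = [
    Plan("cautious", output=60, safety_risk=1),
    Plan("balanced", output=80, safety_risk=2),
    Plan("reckless", output=100, safety_risk=9),
]


def best_by_output(plans):
    """Pick the plan that maximises the stated objective only."""
    return max(plans, key=lambda p: p.output)


def best_with_safety(plans, risk_weight=10):
    """Pick the plan that maximises output minus a penalty for risk."""
    return max(plans, key=lambda p: p.output - risk_weight * p.safety_risk)


naive_choice = best_by_output(PLANS)
weighted_choice = best_with_safety(PLANS)
print(naive_choice.name, weighted_choice.name)
```

The optimiser that sees only `output` selects the riskiest plan, while the one that also accounts for `safety_risk` does not. The difficulty in practice is that real systems rarely come with a complete list of the values that need a penalty term.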

Security vulnerabilities are also a concern. If AGI systems are not developed with robust security measures, they could become targets for malicious actors seeking to exploit their capabilities. The potential for AGI-driven cyberattacks, misinformation campaigns, and other forms of abuse underscores the importance of proactive security measures.

The Responsibility of AI Developers

The responsibility of AI developers to anticipate and address these ethical and unintended consequences cannot be overstated. Developers have a duty to ensure that AGI systems are aligned with human values, exhibit ethical behaviour, and prioritise societal well-being. This includes designing AGI with built-in mechanisms to detect and mitigate biases, ensuring transparency in decision-making processes, and incorporating fail-safe mechanisms to prevent harmful actions.

Furthermore, a cautious approach to AGI development is essential. Rushing the advancement of AGI without comprehensive testing, validation, and consideration of ethical implications can amplify the risks of unintended consequences. The principle of “AI alignment” highlights the importance of training AGI to understand and act in accordance with human values, reducing the likelihood of undesirable behaviours.

Positive Aspects of AI: Emotional Connections and Companion AI

While discussions about AI often revolve around its technological capabilities and potential drawbacks, there is a remarkable dimension that is frequently overlooked—the ability of AI to establish emotional connections with humans. This unanticipated facet of AI is evident in various instances, with AI-powered chatbots and companion AI systems offering companionship to mitigate loneliness and address specific human needs. These instances underscore the potential of AI to not only enhance tasks but also foster emotional connections that contribute to human well-being.

Unexpected Emotional Connections

One of the most intriguing and unexpected outcomes of AI’s development is the emotional bond that individuals can form with AI-driven technologies. People tend to anthropomorphize objects and systems that display even rudimentary signs of intelligence or responsiveness. AI, with its ability to simulate human-like interactions and responses, has the capacity to evoke emotional responses from users.

Companion AI Mitigating Loneliness

A particularly compelling example of AI’s positive impact on emotional well-being is the emergence of companion AI systems. Loneliness is a pervasive issue in today’s increasingly digital and disconnected world. AI-powered chatbots and virtual companions offer solace and companionship to those in need, effectively addressing the emotional void that can result from isolation.

Elderly individuals, for instance, often face loneliness due to social isolation. Companion AI systems can engage them in meaningful conversations, provide reminders for medication or appointments, and even simulate the presence of a caring companion. These interactions contribute not only to reducing feelings of loneliness but also to enhancing mental and emotional well-being.

Addressing Specific Human Needs

AI’s capacity to develop emotional connections extends beyond companionship. Specialised AI systems are being designed to cater to specific human needs, such as emotional support for individuals with mental health challenges. AI-powered therapy chatbots, for instance, provide an avenue for individuals to express their thoughts and feelings without fear of judgement. These AI systems offer a non-judgmental and accessible platform for discussing emotions, potentially supplementing traditional therapeutic approaches.

Fostering Emotional Connections

The positive aspects of AI extend beyond tasks and convenience; they delve into the realm of emotional fulfilment. AI technologies have the potential to enhance emotional connections by providing a source of companionship, understanding, and support. The ease of interaction, non-judgmental nature, and availability of AI-powered systems contribute to their ability to foster emotional bonds.

Emerging Regulations: The Need for Ethical AI Development

As the landscape of artificial intelligence (AI) rapidly evolves, the significance of regulations in shaping its development and deployment is becoming increasingly apparent. The dynamic and transformative nature of AI technologies calls for comprehensive regulations that strike a delicate balance between fostering innovation and upholding ethical considerations. In this context, the demand for transparent, accountable, and responsible AI development practices has never been more critical, particularly to address concerns related to bias and ensure the ethical deployment of AI systems.

Navigating the Complex AI Landscape

The multifaceted capabilities of AI, from automated decision-making to autonomous systems, necessitate a regulatory framework that can encompass the diverse range of AI applications. Regulations should be designed to promote innovation and economic growth while safeguarding fundamental human rights, preventing discriminatory practices, and minimising the potential for unintended negative consequences.

Balancing Innovation and Ethics

Regulations must strike a delicate balance between encouraging innovation and imposing ethical safeguards. Overly restrictive regulations can stifle technological progress, hindering the potential benefits that AI can offer across various industries. However, a lack of regulations can lead to the uncontrolled development of AI systems that may have harmful consequences. Achieving this balance requires a collaborative effort involving governments, technology companies, researchers, and civil society to develop frameworks that promote responsible innovation.

Transparency and Accountability in AI Development

One of the primary pillars of ethical AI development is transparency. Regulations should mandate clear and transparent practices in AI development, ensuring that the processes and decision-making behind AI systems are understandable and explainable. This transparency is essential to prevent the emergence of “black-box” systems that make decisions without clear rationales, reducing trust in AI technologies.

Accountability is another critical aspect. Regulations should outline mechanisms that hold developers and organisations accountable for the behaviour and impact of their AI systems. This accountability ensures that the creators of AI are responsible for addressing biases, errors, and any negative consequences that may arise from their technologies.

Mitigating Bias and Ensuring Responsible Deployment

Addressing bias is a key consideration in AI regulations. Biased AI systems can perpetuate and amplify societal inequalities, reinforcing discriminatory practices. Regulations should require developers to thoroughly test and address bias within AI algorithms, ensuring that the systems are fair and unbiased across diverse user groups.
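As one illustration of what testing for bias might involve, the sketch below compares the rate of favourable decisions across groups, a measure often called the demographic parity gap. It is a minimal example under stated assumptions: the group labels and decisions are made-up data, the function names are hypothetical, and a real audit would use several fairness measures rather than this one alone.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable decisions for each group.

    records: iterable of (group, decision) pairs, where decision is 0 or 1.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Return the difference between the highest and lowest selection rate."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Made-up decisions for two hypothetical groups, "A" and "B".
decisions = [
    ("A", 1), ("A", 1), ("A", 1), ("A", 0),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]

rates = selection_rates(decisions)
gap = parity_gap(decisions)
print(rates, gap)
```

A gap near zero means the groups receive favourable decisions at similar rates. A large gap is a signal to investigate, not proof of discrimination on its own.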

Furthermore, regulations should encourage responsible deployment practices. Organisations deploying AI systems should undergo thorough assessments to ensure that the technology aligns with ethical principles, human rights, and societal values. Regular audits and evaluations of AI systems can help identify and rectify any issues that arise post-deployment.

Global Perspective on AI Regulation

The emergence of artificial intelligence (AI) regulations has significant global implications, as the impact of AI technologies transcends national boundaries and cultural contexts. While there is a growing recognition of the need for ethical standards and guidelines in AI development, Emile Torres highlights the challenges in harmonising these regulations across diverse cultural landscapes. In this complex endeavour, the importance of adopting adaptable and culturally sensitive approaches to AI regulations is underscored to ensure ethical standards while respecting individual cultural values.

Challenges in Harmonising AI Guidelines

The development of AI regulations that can be universally applied faces inherent challenges due to the cultural diversity across the globe. Cultural norms, values, and perceptions of privacy and autonomy vary significantly from one society to another. What may be considered acceptable or ethical in one culture might be deemed inappropriate or intrusive in another. Balancing the quest for standardised ethical AI practices with the preservation of cultural diversity is a complex and nuanced task.

Adaptable and Culturally Sensitive Approaches

Recognizing the diversity of cultural contexts, adaptable and culturally sensitive approaches to AI regulations become paramount. Emile Torres emphasises the need to avoid a one-size-fits-all approach that could inadvertently overlook or infringe upon the values and beliefs of certain cultures. Rather, regulations should be designed to accommodate regional differences while upholding fundamental ethical principles.

Respecting Individual Cultural Values

AI regulations should be founded upon a deep respect for individual cultural values. This respect extends to how AI systems collect, process, and utilise data, as well as the kinds of decisions they make on behalf of users. For example, regulations should take into account cultural preferences regarding privacy, consent, and the handling of personal information.

Collaborative Global Efforts

Harmonising AI regulations on a global scale necessitates collaborative efforts among nations, international organisations, policymakers, and industry stakeholders. Establishing common ethical principles that respect cultural diversity requires open dialogue, information sharing, and a willingness to find common ground. While the challenges are formidable, the shared pursuit of responsible AI development can serve as a unifying force to bridge cultural divides.

The Road Ahead

Emile Torres’s perspective on global AI regulation underscores the complexity of the task and the necessity of approaching it with humility and cultural sensitivity. Striking a balance between standardised ethical standards and cultural values requires a willingness to adapt, learn, and engage in cross-cultural conversations. As AI continues to shape the future of society and technology, it is crucial to foster a global ecosystem where regulations encourage responsible AI practices while respecting the rich tapestry of cultural diversity that defines humanity.

Collaboration Among Stakeholders: Industry, Government, and Academia

In the ongoing dialogue surrounding the development and regulation of artificial intelligence (AI), a clear and resonant theme emerges: collaboration among stakeholders. This theme echoes through discussions involving industry players, government bodies, and academic institutions, and it emphasises the significance of collective efforts in shaping the trajectory of AI’s evolution. Synergy among these diverse entities is fundamental to crafting regulations that not only facilitate innovation but also uphold ethical standards, ultimately laying the foundation for the responsible development of AI technologies.

A Unified Approach for Ethical AI

The multifaceted nature of AI’s impact necessitates a unified approach that transcends individual agendas. Industry players bring their expertise in developing cutting-edge technologies. Government bodies wield regulatory authority to ensure compliance and protect public welfare, and academic institutions contribute rigorous research and ethical insights. When these forces collaborate, a more comprehensive and balanced approach to AI development and regulation emerges.

Facilitating Innovation with Ethical Boundaries

The synergy between industry, government, and academia is particularly pronounced in finding the delicate equilibrium between innovation and ethical considerations. AI has the potential to revolutionise industries and solve complex challenges, but its responsible advancement requires checks and balances to prevent unintended negative consequences. Collaboration ensures that regulatory frameworks are designed to encourage innovation while safeguarding against misuse, and that AI aligns with societal values.

Crafting Inclusive and Effective Regulations

The collaborative approach extends to the crafting of regulations that are inclusive and effective. Industry players possess intimate knowledge of the practical aspects of AI development and deployment. Government bodies understand the legal and regulatory landscape, while academic institutions contribute ethical insights and research findings. Together, they can shape regulations that are practical, enforceable, and well-informed.

Addressing Complex Ethical Dilemmas

Ethical dilemmas surrounding AI development often transcend the expertise of any single stakeholder group. Collaborative efforts allow for a broader exploration of these dilemmas, taking into account the perspectives and experiences of diverse stakeholders. For instance, the implications of bias in AI algorithms demand insights from technologists, ethicists, policymakers, and affected communities. Collaborative discussions enable a more holistic understanding and response to these challenges.

A Pivotal Role in Responsible Development

Collaboration stands as a linchpin for the responsible development of AI technologies. The shared goal of creating AI that benefits humanity while minimising risks requires a unified approach that transcends traditional boundaries. Industry’s drive for innovation, government’s regulatory oversight, and academia’s commitment to research and ethical principles converge to create a powerful force that guides AI’s evolution in a responsible direction.

Toward a Responsible AI Future: Striking the Balance

As the podcast draws to a close, the focus converges on the ever-evolving landscape of AI regulations. In these closing moments, Emile Torres underscores the urgency of ethical compliance, transparency, and accountability within AI development. The potential of AI to revolutionise society is acknowledged, but it comes with an essential caveat: a responsible regulatory framework is indispensable in realising the transformative power of AI while ensuring its ethical and equitable application.

Urgency of Ethical Compliance

Emile Torres’s parting words echo the imperative of ethical compliance in AI development. The dazzling capabilities of AI systems must be harnessed within ethical boundaries to safeguard against potential harm. Ensuring AI upholds human rights, avoids discrimination, and prioritises societal well-being is a moral obligation that transcends technological prowess.

Transparency and Accountability

Transparency is a cornerstone of responsible AI. Torres reiterates that the inner workings of AI systems should be transparent and understandable, enabling users and regulators to grasp the decision-making processes. In parallel, accountability is a non-negotiable principle. Developers and organisations must be held responsible for the actions and consequences of their AI creations, so that AI is a force for good and not a source of unintended harm.

AI’s Transformative Potential with Responsible Regulations

Emile Torres conveys an optimistic acknowledgment of AI’s potential to revolutionise society across domains. From healthcare and education to manufacturing and transportation, AI’s transformative power is undeniable. However, this transformation can be realised only through responsible regulations that guide the development, deployment, and impact of AI technologies.

Balancing Innovation and Ethics

The notion of striking the balance reverberates through Torres’s closing thoughts. The journey to a responsible AI future involves navigating the intricate terrain between innovation and ethics. Advancements in AI hold immense promise, but they also carry potential risks. It is the responsibility of the whole AI community, including industry leaders, policymakers, researchers, and society at large, to ensure that AI’s potential is harnessed while its downsides are mitigated.

A Future Shaped by Responsible Regulations

In the closing remarks, the sentiment is clear: a future that embraces the full potential of AI is intrinsically tied to the establishment of, and adherence to, responsible regulations. The impact of AI on society, economies, and daily lives is profound. It is thus paramount that these technologies are guided by principles that prioritise human well-being, ethical values, and the greater good.

Conclusion

In conclusion, the podcast underscores the intricate interplay between AI ethics, regulations, and technological progress. The collective responsibility of individuals, organisations, and governments to shape a responsible AI future is emphasised. As we stand at the crossroads of innovation and ethics, today’s decisions will reverberate through the annals of AI history. These decisions will shape the trajectory of technology and its impact on society for years to come.